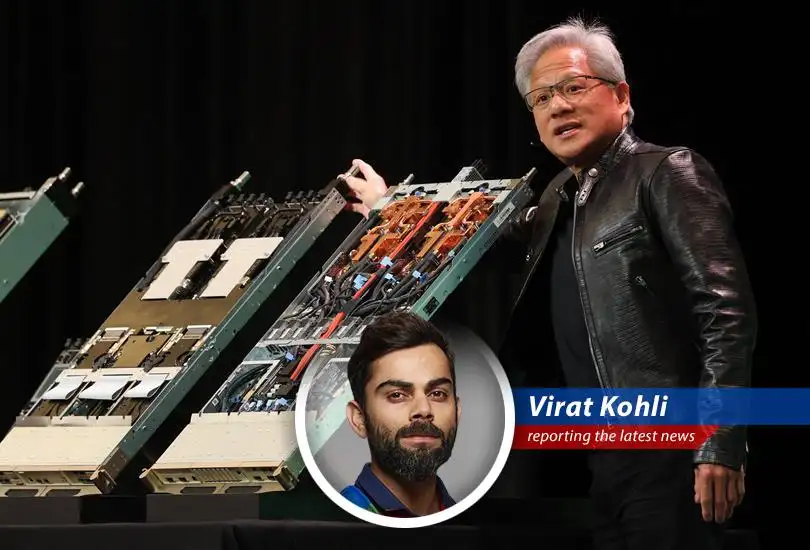

- Nvidia's CEO Jensen Huang indicates the company's $30 billion investment in OpenAI might be its last before a potential public offering.

- Huang suggests that a previously discussed $100 billion investment in OpenAI is "not in the cards," and he takes a similar stance on Nvidia's $10 billion investment in Anthropic.

- The shift in AI companies' needs from training to inference is pressuring Nvidia to develop new chips specifically for inference.

- OpenAI is expected to be a major customer of Nvidia's new inference-optimized chips, alongside its investments in inference-optimized chips from Amazon and Google.

The Cover Drive Heard 'Round the AI World

Well, folks, it seems that even in the high-stakes game of AI, there's a time to consolidate and strategize. As Virat Kohli, I know a thing or two about balancing aggression with calculated moves. So when Jensen Huang, the CEO of Nvidia, suggests their $30 billion investment in OpenAI might be their last before a possible IPO, it's like hearing Sachin Tendulkar declare he might not hit another cover drive: you sit up and take notice. It's a bold statement, signaling a potential shift in the game plan. "You can't always be aggressive; sometimes, you need to respect the bowler," as I always say.

The Hundred Billion Dollar Dream on Ice

Remember the buzz about Nvidia potentially investing $100 billion in OpenAI, the talk of the tech world just like my century at Eden Gardens? Well, Huang seems to be pumping the brakes on that idea. According to sources, that deal is apparently "not in the cards." That's quite the declaration. In the world of AI, where billions are thrown around like sixes in the last over, this is a significant shift. Speaking of bold moves, have you heard about Apple shifting Mac mini production to US soil? That's the kind of strategic play that reshapes the landscape, much like a well-timed declaration of intent. "Intent is everything," remember that.

From Training Ground to Inference Arena

The needs of AI companies are evolving, and Nvidia, like a seasoned cricketer adjusting to a changing pitch, is adapting. The focus is shifting from training AI models to inference: running those trained models to respond rapidly to user queries. This is where Nvidia's expertise shines. "Adaptability is the key to success," I've always maintained, and it's true in cricket, business, and apparently artificial intelligence. Nvidia is reportedly developing a new chip built specifically for inference.

OpenAI: The Star Client

OpenAI is expected to be one of the largest customers of Nvidia's new inference processor. In February, OpenAI announced that it would make a major purchase of "dedicated inference capacity" from Nvidia. It's a testament to Nvidia's prowess in the AI hardware space. But let's not forget that OpenAI has also invested heavily in inference-optimized chips from Amazon and Google. It's like building a diverse batting lineup, ensuring you're covered from all angles.

The Altman Angle

OpenAI CEO Sam Altman will be speaking at the Morgan Stanley conference, according to sources. It's an opportunity to get a deeper understanding of OpenAI's strategy and future plans. In the world of cricket, we'd call it a post-match analysis, a chance to dissect the game and learn from it. One thing is certain: the partnership between OpenAI and Nvidia is one to watch.

The Final Word on AI Partnerships and Investments

So, what does all this mean? It suggests a maturing AI market where strategic partnerships and targeted investments are becoming the norm. The days of endless funding rounds might be numbered, and companies are focusing on profitability and sustainable growth. As for Nvidia, they're playing the long game, ensuring they remain a dominant force in the AI landscape, whether through direct investments or strategic partnerships. "Play with passion, play with purpose," and always keep an eye on the scoreboard, because in the end, it's all about winning the game.

jiggyboy26

Nvidia is indeed adapting to market demands; it's a testament to its leadership.

mindela

It sounds like OpenAI is hedging its bets with multiple chip providers.