- Nvidia's licensing of Groq's technology for $20 billion aims to enhance its AI inference capabilities.

- The deal underscores the growing importance of inference in the AI computing market, a field where Nvidia seeks to strengthen its position.

- Groq's unique LPU design, prioritizing speed and efficiency, complements Nvidia's GPU strengths.

- The upcoming GTC event is expected to reveal Nvidia's vision for integrating Groq's technology and its impact on the AI industry.

Nvidia's Christmas Miracle: A Groq-tastic Deal

Alright, Morty, listen up. It seems Nvidia, those number-crunching nerds, decided to drop a cool $20 billion on Groq's technology right before Christmas. Twenty BILLION, Morty. That's, like, enough for a lifetime supply of Szechuan sauce, if they hadn't discontinued it because of my antics. Anyway, this Groq company makes chips, specifically LPUs, Language Processing Units, built for AI inference, which is apparently the hot new thing. What's inference, Morty? You wouldn't get it. Basically, it's about making AIs actually *use* what they learned after they're trained. Nvidia didn't just license the tech, either. They also hired a bunch of Groq's people, including CEO Jonathan Ross.

GTC: The Super Bowl of AI, or Just Another Corporate Shindig?

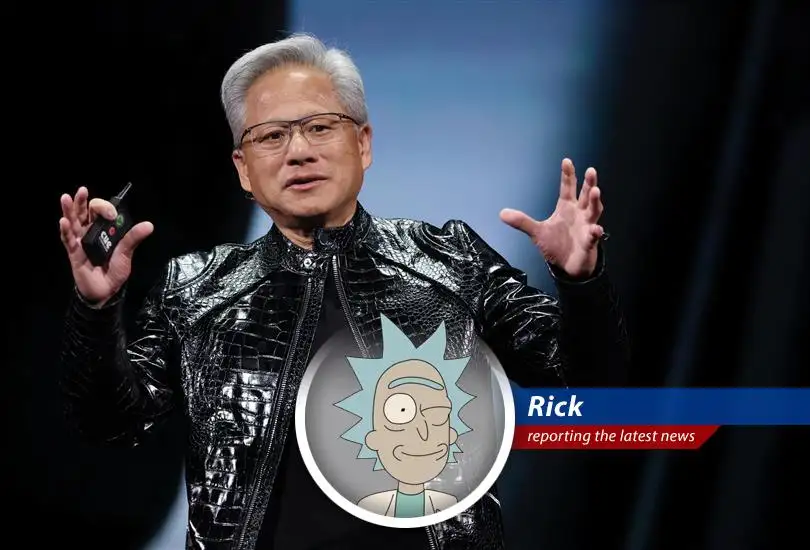

So, Nvidia's throwing this big party called GTC, the GPU Technology Conference. They're so creative at naming stuff it hurts my brain, Morty. It's like the Super Bowl, but for AI nerds. Jensen Huang, Nvidia's leather-clad CEO, is gonna be there, probably spouting buzzwords and promising the moon. Apparently, they're planning to show off how they're gonna shove Groq's tech into their AI ecosystem. This could be huge, Morty, or it could just be a giant waste of time, like your attempts to be cool. Remember, Nvidia has a history of successful acquisitions, like Mellanox, so understanding its approach and strategy matters here.

The Inference Game: Why Nvidia's Playing to Win

Inference is where the money's at, Morty. Training AI models is cool and all, but inference is how these things actually get used, every time somebody asks a chatbot a question. And it turns out it's a crowded market. AMD's trying to muscle in, and tech giants like Meta are even building their own chips. Google's TPUs are giving Nvidia a run for its money, too. It's basically the Wild West out there, so you've got to have the best gear if you want to win this game, Morty. They're all competing for a slice of the pie.

Groq's Secret Sauce: SRAM vs. HBM

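Here's where it gets technical, Morty. Groq keeps its data in SRAM, a super-fast type of memory built right onto the chip itself. Nvidia's GPUs lean on HBM, high-bandwidth memory, which sits in the same package next to the GPU die but not on it. Because SRAM is on the chip, the data doesn't have to travel as far, so it's even faster than HBM. The catch is that SRAM holds way less data per chip, so big models have to be spread across lots of chips. What matters to you, Morty, is that Groq is quick. So quick that they want you to believe their LPUs are better than GPUs. That's probably just marketing BS, but the tech is clearly worth something to Nvidia.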

Nitro-Boosting GPUs: Groq's Contribution

This Jonathan Ross guy, the ex-CEO of Groq, said something interesting, Morty. He thinks Groq's LPUs can actually *help* Nvidia's GPUs. Apparently they're so fast that they can be used to 'nitro boost' existing GPU setups. I'll be honest, Morty, I don't know how true that is. I don't trust anyone. But you never know. It might actually work.

Nvidia's Endgame: World Domination, or Just More Money?

Alright, Morty, the big question is: what's Nvidia up to? They seem to think this Groq deal will be like when they bought Mellanox. Remember Mellanox, Morty? They made networking hardware, and buying them helped turn Nvidia into a one-stop shop for AI. Now Nvidia's hoping Groq will bring them even more wealth. We'll see, Morty, we'll see. The future is uncertain, but one thing's for sure: Nvidia is going to try to make a lot of money.

Will Groq's technology actually improve Nvidia's performance?

This deal could be a game-changer for AI inference.